CZS supported AI projects – session 3

15:15 – 16:00

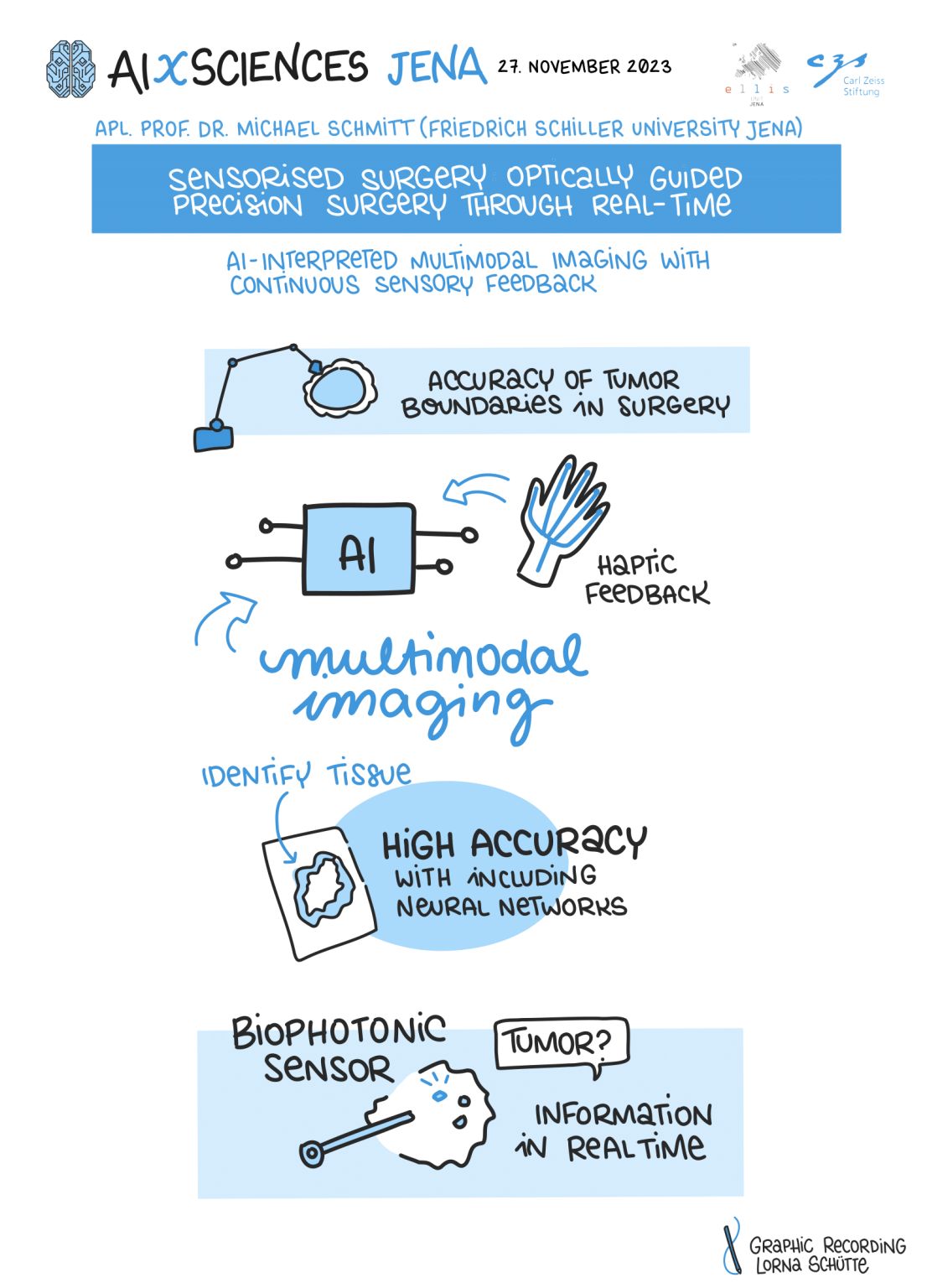

Sensorised surgery: Optically guided precision surgery through real-time AI-interpreted multimodal imaging with continuous sensory feedback

Intraoperative decision-making for tumour resection is based on the surgeon’s experience, supported by macroscopic, endoscopic, microscopic or robotic and navigation-assisted examination of the tumour. However, this approach does not allow the tumour borders to be defined precisely and can lead to incomplete tumour resection and thus to poor patient survival. Intraoperative navigation is based on preoperative imaging; because the surgical site changes continuously during the operation, these data become increasingly outdated and thus inaccurate. Preliminary work by the project partners shows that multimodal marker-free imaging is suitable for accurately determining tumour boundaries. This project aims to use multimodal imaging and artificial intelligence (AI)-based real-time analysis to continuously record the current tumour boundary in the operating theatre and visualise it for the surgeons. The analysis will also be used to provide haptic feedback so that the surgeons can immediately use the information to make decisions.

In this complex project, sensorised surgery means two things: sensors are used, on the one hand, for more precise tissue analysis and, on the other hand, for optimised acquisition and transmission of information for surgical tactics. In this way, sensorised surgery can not only tailor the operation precisely to the individual patient but, above all, increase the rate of complete tumour resections while sparing as much healthy tissue as possible. The realisation of an intuitive sensory interaction between machine and surgeon will be a breakthrough that increases the acceptance of this new personalised-medicine technology by surgeons and patients, which in turn will accelerate its transfer into everyday care. The challenges lie in reducing potential model bias caused by limited training data and in providing information about the uncertainty of the analysis, which should be visualised for the surgeons in an appropriate way. Real-time operation also requires model compression without compromising the quality of the results.

Speaker and Alliance Partner

Michael Schmitt received his Ph.D. in chemistry from the University of Würzburg in 1998. From 1999 to 2000 he pursued postgraduate studies at the Steacie Institute for Molecular Sciences at the National Research Council of Canada. He subsequently joined the group of Prof. Dr. W. Kiefer at the University of Würzburg, where he finished his habilitation in 2004. Since March 2004 he has been a research associate in the group of Prof. Dr. J. Popp at the Institute of Physical Chemistry at the Friedrich-Schiller-Universität Jena. In 2010 he was promoted to the rank of associate professor (Außerplanmäßiger Professor) at the Friedrich-Schiller-Universität Jena. His main research interests are Raman spectroscopy, non-linear spectroscopy and non-linear multimodal imaging for biomedical and material research. He has authored more than 270 publications in peer-reviewed journals. He is assistant editor of the Journal of Biophotonics. In 2018 he received the Kaiser-Friedrich-Forschungspreis.

Project leader

Orlando Guntinas-Lichius is a university professor at the FSU Jena and the clinic director of the Department of Otorhinolaryngology at Jena University Hospital. His research encompasses salivary gland diseases, head and neck tumours, new optical and biophotonic diagnostics, facial nerve regeneration, speech understanding in hearing loss, chronic tinnitus, and the digitization of medicine, particularly in otorhinolaryngology. He serves as the coordinator of the BMBF project WeCaRe, which aims to enhance individual patient benefit by ensuring or improving local care for patients with varying mobility in structurally weak regions. In much of his work, he integrates basic medical research with the application of modern methods in digitization and AI, collaborating particularly with partners in optics/photonics and computer science. In 2023, the WeCaRe consortium secured second place in the Thuringian Digital Prize.

Principal Investigator